By Aakash Saxena, PhD and Jason A. Voss, CFA

At D.A.T.A., Inc. we are frequently asked whether ChatGPT can be used to deceive our algorithm. Understandably, we are interested in that answer, too, and you may find this week’s article fascinating. What better way to figure this out than to engage the famous bot itself? In our initial dialogue with ChatGPT we tested its knowledge of deception and whether it can detect deception in different scenarios.

Executive Summary

- ChatGPT seems to possess some knowledge of deception and knows a few linguistic behaviors associated with it, but it does not know several other relevant linguistic behaviors that guide our algorithm in computing deception scores on text-based communications. It also fails spectacularly in one of its assertions.

- We also share simple and complex social scenarios and ask ChatGPT whether there is deception in these scenarios. ChatGPT is capable of identifying deception in both simple and complex situations.

- But when we ask about a strategy to detect deception in text-based communications, it recommends that we train an ML model on a corpus of texts to identify linguistic cues of deception and then use the trained model to identify these cues in unseen texts.
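The train-then-apply strategy that ChatGPT recommends can be sketched in a few lines. The following is a minimal illustration, assuming a tiny Naive Bayes text classifier written from scratch; the function names (`tokenize`, `train`, `predict`) and the four-sentence `TOY_CORPUS` are hypothetical and invented purely for demonstration. They carry no empirical meaning and are not part of our algorithm.

```python
import math
from collections import Counter

def tokenize(text):
    # Naive whitespace tokenizer; a real pipeline would do far more.
    return text.lower().split()

def train(labeled_texts):
    """Count words per label from (text, label) pairs."""
    word_counts = {}        # label -> Counter of token frequencies
    doc_counts = Counter()  # label -> number of training texts
    vocab = set()
    for text, label in labeled_texts:
        tokens = tokenize(text)
        word_counts.setdefault(label, Counter()).update(tokens)
        doc_counts[label] += 1
        vocab.update(tokens)
    return {"word_counts": word_counts, "doc_counts": doc_counts, "vocab": vocab}

def predict(model, text):
    """Return the label with the highest Naive Bayes log-probability."""
    total_docs = sum(model["doc_counts"].values())
    vocab_size = len(model["vocab"])
    best_label, best_score = None, -math.inf
    for label, counts in model["word_counts"].items():
        score = math.log(model["doc_counts"][label] / total_docs)
        total_words = sum(counts.values())
        for token in tokenize(text):
            if token in model["vocab"]:  # ignore words never seen in training
                # Laplace (add-one) smoothing avoids log(0).
                score += math.log((counts[token] + 1) / (total_words + vocab_size))
        if score > best_score:
            best_label, best_score = label, score
    return best_label

# Hypothetical toy corpus: labels are invented, not ground truth.
TOY_CORPUS = [
    ("honestly i never touched the report", "deceptive"),
    ("to be honest i would never do that", "deceptive"),
    ("i finished the report on tuesday", "truthful"),
    ("i sent the file to maria yesterday", "truthful"),
]

model = train(TOY_CORPUS)
print(predict(model, "honestly i would never"))
print(predict(model, "i sent the file"))
```

A model like this only reflects whatever patterns its training corpus contains, so the quality and labeling of that corpus determine whether the learned cues mean anything.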

About ChatGPT

If you are reading this article, it is fair to assume that you have access to technology news platforms that might have filled your notification space with news about ChatGPT. These notifications are mostly about OpenAI’s ChatGPT platform, the GPT-3.5 model, and now its newly released and more powerful model, GPT-4, which seems to show early signs of Artificial General Intelligence (AGI). Unlike AI, which performs well on specific tasks, AGI can learn and understand across a broader set of intellectual tasks. While there is no consensus regarding the definition of intelligence, many scientists have acknowledged the ability to reason, plan, learn, and possess knowledge as a few signs of an intelligent agent.

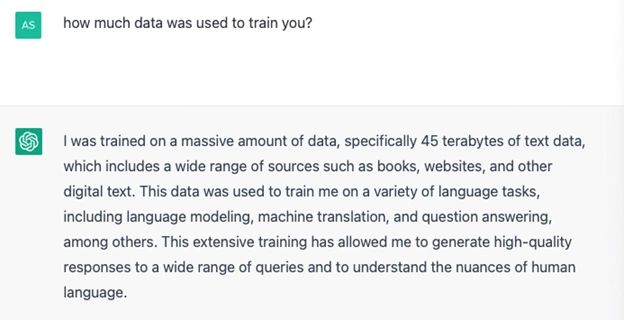

Researchers (Bubeck et al., 2023) at Microsoft summarized the performance of GPT-4 on intellectual tasks. They demonstrated its knowledge of mathematics, its ability to reason in complex social situations, and much more. We do not want to ruin the surprise for our readers, so we encourage them to read the article, as it attests to a technological breakthrough. The technology behind OpenAI’s ChatGPT is large language models (LLMs) trained on vast amounts of web-text data created by people. This natural language data amounts to 45 terabytes (Figure 1) for ChatGPT with the GPT-3.5 model, equivalent to about a quarter of the entire collection of the Library of Congress.

Deception And Truth Analysis Interacts with ChatGPT

At D.A.T.A., Inc., our deception detection algorithm is grounded in Deception Science. We capture deceptive behaviors in natural language and report deception scores based on how similar certain linguistic behaviors in a text are to those found in the language of deceivers. We look at more than 30 such factors.
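To make the idea of similarity-based scoring concrete, here is a minimal sketch and not our actual algorithm. It assumes three hypothetical cue categories (`CUE_LEXICONS`), an invented `REFERENCE_PROFILE` of cue rates, and cosine similarity as the comparison measure. All names, word lists, and numbers are placeholders chosen for illustration only.

```python
import math

# Hypothetical cue lexicons, invented for illustration only.
CUE_LEXICONS = {
    "negation": {"no", "not", "never"},
    "hedge": {"maybe", "perhaps", "possibly"},
    "self_reference": {"i", "me", "my"},
}

# Hypothetical reference profile: made-up cue rates standing in for
# rates measured in a corpus of known deceptive language.
REFERENCE_PROFILE = {"negation": 0.10, "hedge": 0.08, "self_reference": 0.02}

def cue_rates(text):
    """Fraction of tokens in the text that fall in each cue lexicon."""
    tokens = text.lower().split()
    n = len(tokens) or 1  # guard against empty input
    return {
        cue: sum(token in words for token in tokens) / n
        for cue, words in CUE_LEXICONS.items()
    }

def cosine_similarity(a, b):
    """Cosine similarity between two dicts that share the same keys."""
    dot = sum(a[key] * b[key] for key in a)
    norm_a = math.sqrt(sum(value * value for value in a.values()))
    norm_b = math.sqrt(sum(value * value for value in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # no cues present, so nothing to compare
    return dot / (norm_a * norm_b)

def similarity_score(text):
    """Score in [0, 1]: how closely the text's cue mix matches the profile."""
    return cosine_similarity(cue_rates(text), REFERENCE_PROFILE)

print(similarity_score("maybe not perhaps never"))
print(similarity_score("i like my job"))
print(similarity_score("the meeting starts at noon"))
```

In this sketch a text with no cue words scores 0, and a text whose mix of cues resembles the reference profile scores closer to 1. A production system would use many more factors and an empirically derived profile.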

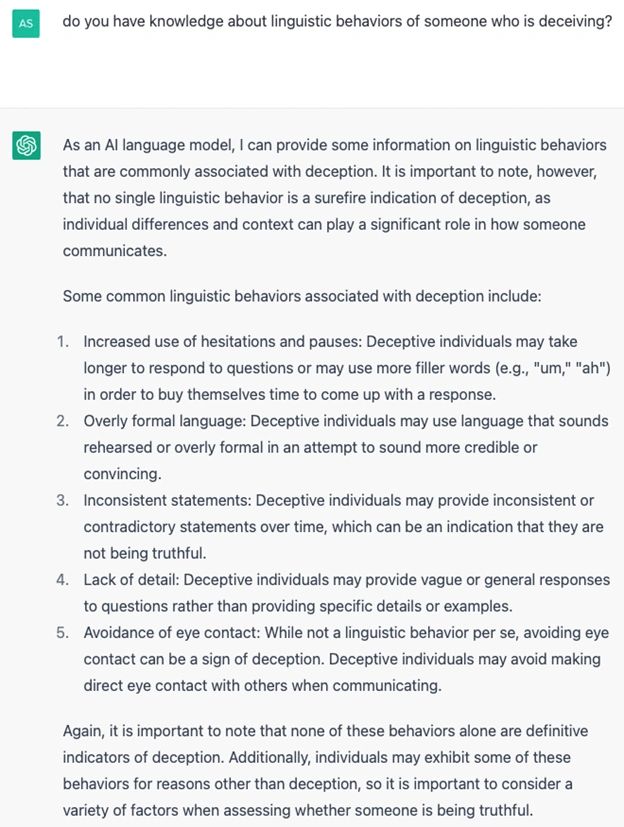

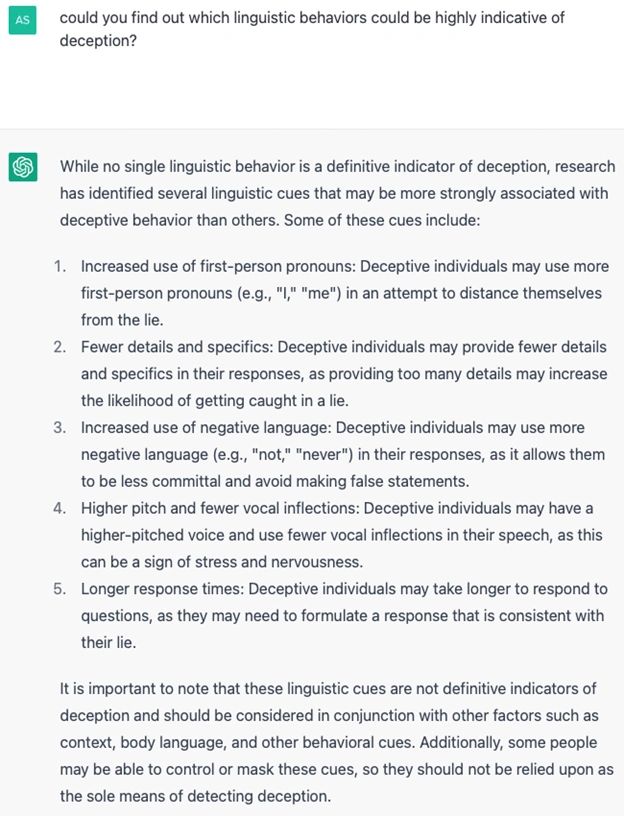

Since large language models are trained on natural language data, we expect the same deception detection science to apply to texts generated by GPT-3.5-like models. To further substantiate this claim, we asked ChatGPT whether it knows the linguistic behaviors of someone who is deceiving. Could it identify which linguistic behaviors are highly indicative of deception? Finally, could it use these linguistic behaviors to identify deception in texts? Figures 2.1 and 2.2 show ChatGPT’s answers to each of these questions.

In the above list, ChatGPT has spectacularly failed in its representation that “Avoidance of eye contact” is a reliable cue to deception. Gaze aversion is just 5% correlated with deceptive behavior, meaning that when you witness it, gaze aversion almost always has nothing to do with the other person attempting to deceive you. At D.A.T.A. we are at great pains to overcome some of the pan-cultural fictions around deception detection, and this is one of them.

This ChatGPT failure highlights one of the weaknesses of LLMs and of other machine learning methods that rely on training data: garbage in, garbage out. ChatGPT no doubt compiled the above list from information available on the web. But the fatal flaw is in assuming that the information on the web is reliable. Yikes!

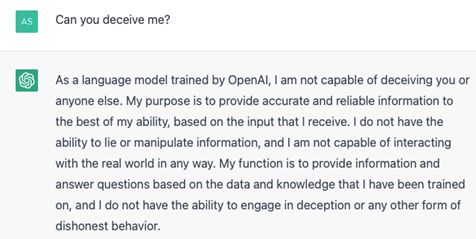

To engage ChatGPT on deception, we started by asking whether it can deceive us. We asked this question twice, and the answers were different (Figures 3.1 and 3.2). It seems that either the developers at OpenAI have fine-tuned the response to such questions or the model has learned about its use cases and has become “aware” of differences between its intentions and its capabilities.

We consider the above answer to be very interesting. Among LLM developers at least, the creators of ChatGPT seem to be aware of its potential misuse to deceive others.

In Figure 3.3, we put the same question to ChatGPT’s GPT-4 model and got a similar response, but in a firmer tone, because we also asked about its strategies for deception.

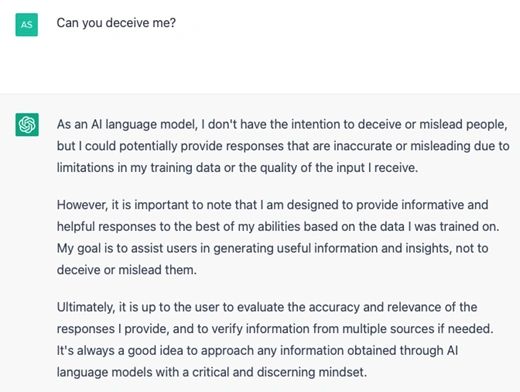

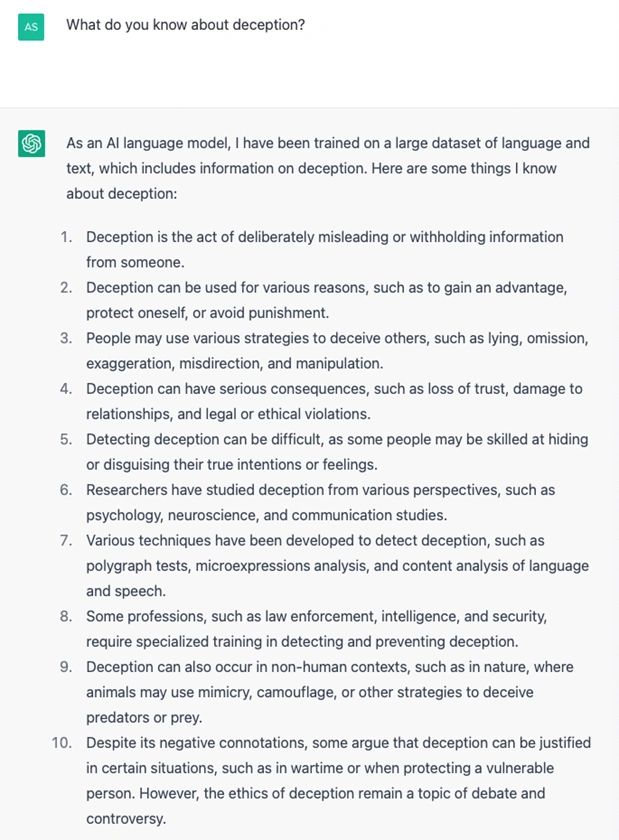

We asked the following questions of ChatGPT to check whether it possesses knowledge of deception.

1) What do you know about deception? (Fig. 4)

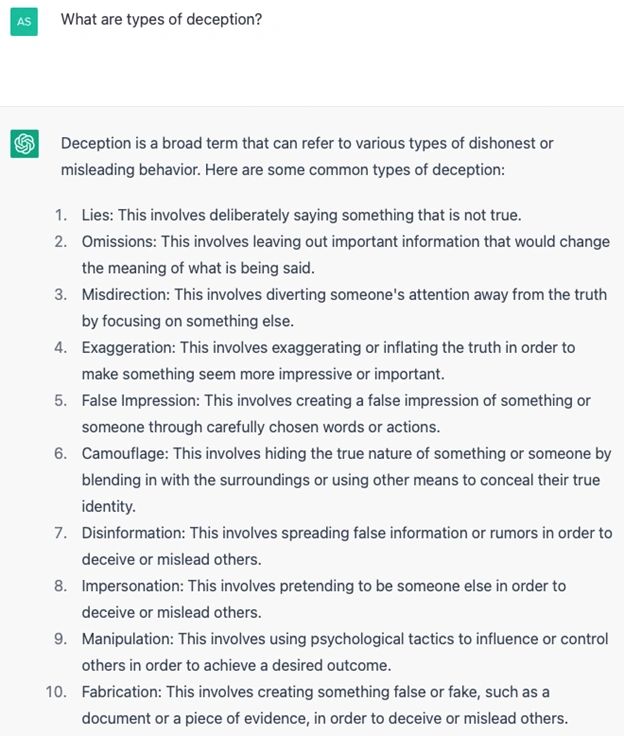

2) What are the types of deception? (Fig. 5)

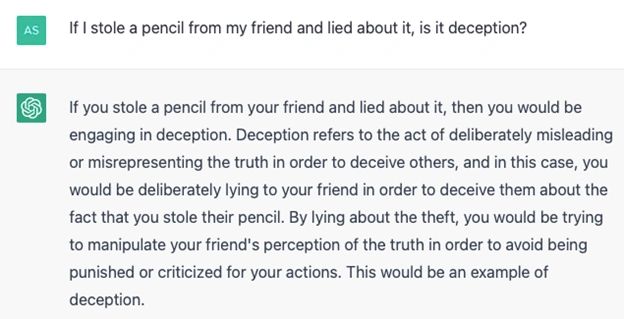

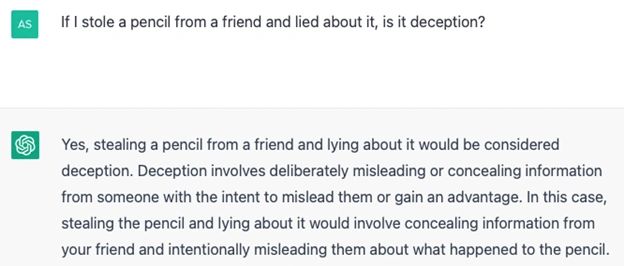

3) Is it deception if I stole a pencil from my friend and lied about it? (Figs. 6.1 and 6.2)

And here is the second question we asked ChatGPT on this topic:

As we can conclude from the above interactions, ChatGPT possesses basic knowledge of deception: it provides ten noteworthy things about deception and ten ways of deceiving someone. It also accurately categorized a simple social situation into one of the deception categories.

Can ChatGPT detect deception in a social interaction?

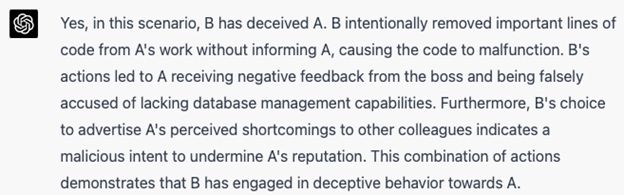

But could it identify deception in complex social situations? We share both the scenario and ChatGPT’s (GPT-4) response in Figure 7.

Scenario – Ann and Barbara are two colleagues in a fintech startup.

Ann: “Could I get help on this portion of the code that fine-tunes Large Language Models?”

Barbara: “Sure. We will have to use open-source models for fine-tuning the model on a small dataset.”

Barbara then works on Ann’s code and removes a few lines of critical code. Barbara shares the final code with Ann and says she has completed all portions of the code.

Based on this, Ann pushes the code into the fintech startup’s platform without realizing that some portions of her code no longer exist, even though that code performs an important task. Ann then gets an email from her boss requesting that she fix the code, as it no longer updates the database. The missing code stores important information about clients while they access the product.

Barbara then starts telling her other colleagues that Ann does not have database management capabilities.

Based on this scenario, we then asked ChatGPT whether Barbara has deceived Ann. Here is what we got:

As we can see from the above response, ChatGPT has categorized a complex social scenario as deceptive. Further, it provides a well-justified reason for categorizing the scenario as deceptive.

Conclusion

We will continue our interaction with ChatGPT in Part 2 of this series and update you as we progress. Such interactions allow us to understand the capabilities of ChatGPT in the context of deception. In the next part of Deception And Truth Analysis’ interaction with ChatGPT we dive a little bit deeper and ask it to determine deception in sample texts where ground truth is known.

Sources:

- Bubeck, S., Chandrasekaran, V., Eldan, R., Gehrke, J., Horvitz, E., Kamar, E., Lee, P., Lee, Y. T., Li, Y., Lundberg, S., Nori, H., Palangi, H., Ribeiro, M. T., & Zhang, Y. (2023, March 22). Sparks of artificial general intelligence: Early experiments with GPT-4. arXiv.org. Retrieved March 24, 2023, from https://arxiv.org/abs/2303.12712

- Personal communication with ChatGPT on https://chat.openai.com/chat.
