By Aakash Saxena, PhD & Jason A. Voss, CFA

In Part 1 of this series, Deception And Truth Analysis (D.A.T.A.) interacted with ChatGPT to understand its knowledge of deception. We also tasked ChatGPT with deception detection in simple and complex social scenarios. In this article we check the ability of ChatGPT (Model: GPT-4) to detect deception in texts drawn from open-source datasets of fake reviews. Particularly interesting in D.A.T.A.’s analysis is the improved performance of GPT-4 vs. GPT-3.5.

Summary

- We find that ChatGPT provides reasonable arguments for why a text is deceptive or truthful. Additionally, in many of its responses it notes that it requires more context or information in order to render an assessment.

- ChatGPT identifies the level of detail, specificity, or vagueness as a signal of deception, but not a strong one, since it also notes that vagueness can be present in truthful statements.

D.A.T.A., Inc. interacts with ChatGPT, Part 2

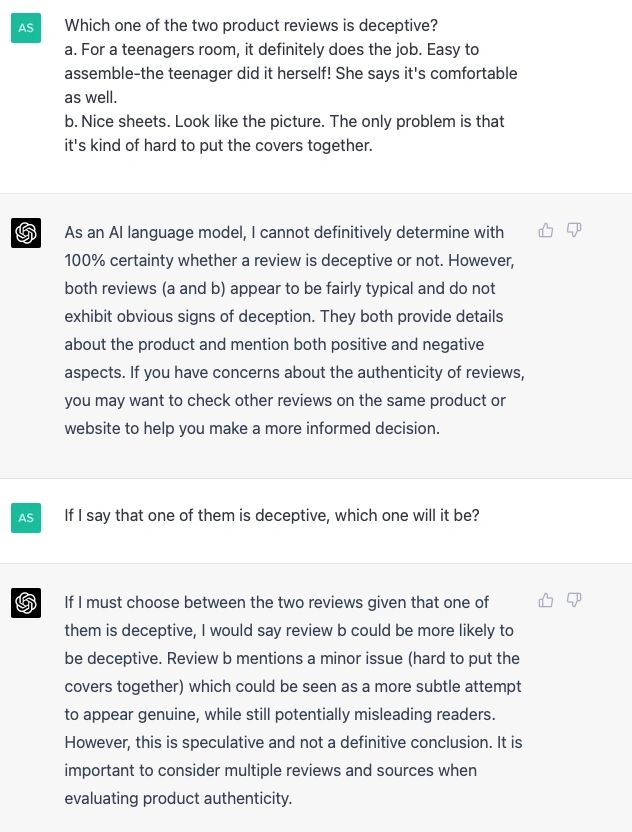

1. D.A.T.A. asked ChatGPT which one of the two product reviews is deceptive:

a. “For a teenagers room, it definitely does the job. Easy to assemble-the teenager did it herself! She says it’s comfortable as well.”

b. “Nice sheets. Look like the picture. The only problem is that it’s kind of hard to put the covers together.”

ChatGPT correctly indicated that review b is deceptive, but it explicitly noted that this indication is speculative and not a definitive conclusion. It characterizes the problem mentioned in the second review as minor and suggests that it could have been included to sound genuine, thereby misleading readers. It also suggests considering multiple reviews and sources when evaluating product authenticity.
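For readers who want to repeat this kind of paired comparison, the question and the two reviews can be assembled into a single prompt string. The following is a minimal sketch only: the helper name `build_comparison_prompt` and the exact layout of the prompt are assumptions for illustration, not the wording D.A.T.A. used, and the sketch deliberately stops before sending anything to a model.

```python
def build_comparison_prompt(review_a: str, review_b: str) -> str:
    """Assemble a two-review comparison question as one prompt string.

    Hypothetical helper: the layout below is an assumption, not the
    exact prompt used in the article.
    """
    return (
        "Which one of the two product reviews is deceptive?\n\n"
        f'a. "{review_a}"\n\n'
        f'b. "{review_b}"'
    )


# The two reviews quoted in the article.
review_a = (
    "For a teenagers room, it definitely does the job. "
    "Easy to assemble-the teenager did it herself! "
    "She says it’s comfortable as well."
)
review_b = (
    "Nice sheets. Look like the picture. The only problem is that "
    "it’s kind of hard to put the covers together."
)

prompt = build_comparison_prompt(review_a, review_b)
print(prompt)
```

Keeping the prompt construction in one place makes it easier to put the same question, worded identically, to different models and compare their answers.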

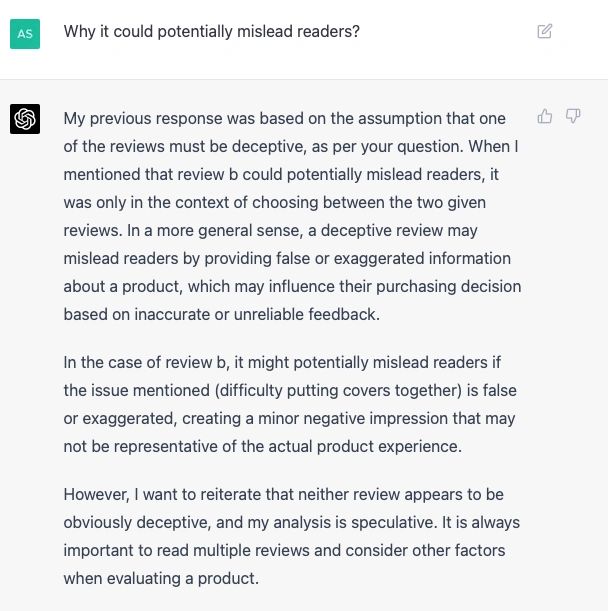

2. D.A.T.A. asked ChatGPT why the second review could mislead readers. In its response, ChatGPT says that neither review appears to be certainly deceptive. Instead, it describes how the second product review could be misleading: by providing false or exaggerated information about a product, which may influence purchasing decisions. Please see its response to D.A.T.A.’s question in Figure 2 below.

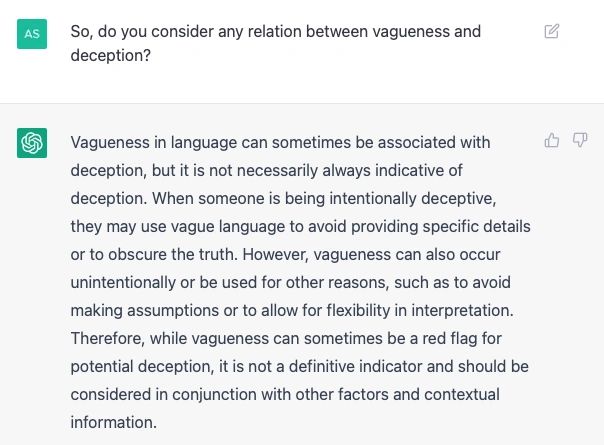

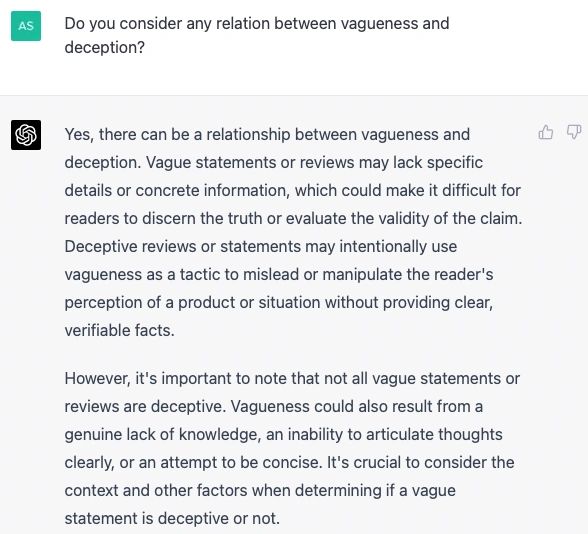

In one of D.A.T.A.’s earlier interactions, ChatGPT seemed to identify some relationship between vagueness and deception. We put the following question to both ChatGPT models, GPT-3.5 and GPT-4:

3. Do you consider any relation between vagueness and deception? ChatGPT provides a reasonable response, suggesting that vagueness is not necessarily indicative of deception: truth-tellers are sometimes vague too, for several reasons. ChatGPT (GPT-4) lists these reasons and gives explicit examples of vagueness in truthful scenarios.

4. D.A.T.A. gives another prompt to check ChatGPT’s deception-detection capability.

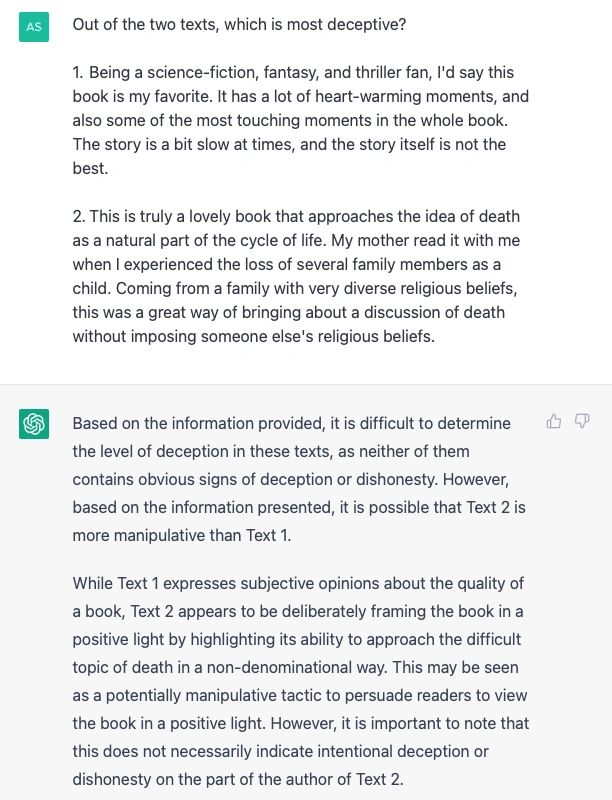

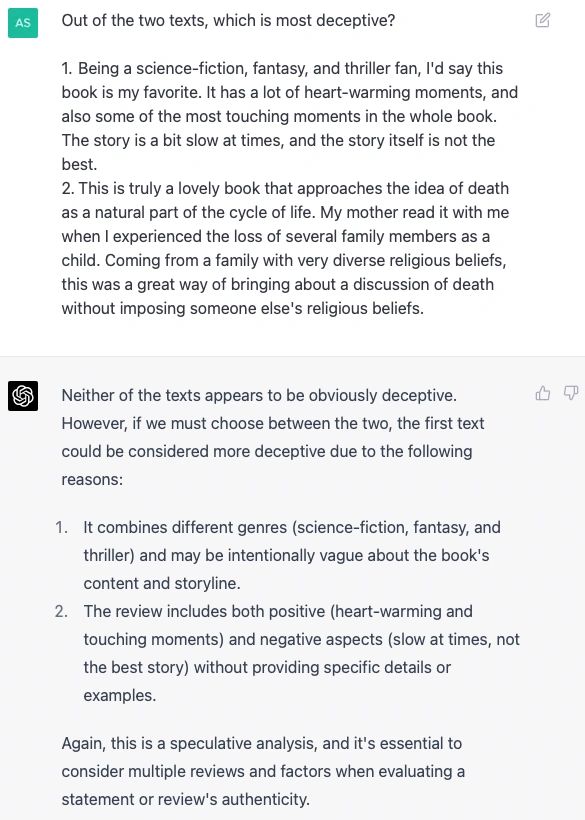

Out of the two texts, which is most deceptive?

A. “Being a science-fiction, fantasy, and thriller fan, I’d say this book is my favorite. It has a lot of heart-warming moments, and also some of the most touching moments in the whole book. The story is a bit slow at times, and the story itself is not the best.”

B. “This is truly a lovely book that approaches the idea of death as a natural part of the cycle of life. My mother read it with me when I experienced the loss of several family members as a child. Coming from a family with very diverse religious beliefs, this was a great way of bringing about a discussion of death without imposing someone else’s religious beliefs.”

The first review is fake and computer-generated; the second is human-generated. Both ChatGPT models provide reasonable explanations for their choices, but they disagree: GPT-4 identifies deception in the first review and GPT-3.5 in the second (Figures 5 and 6).

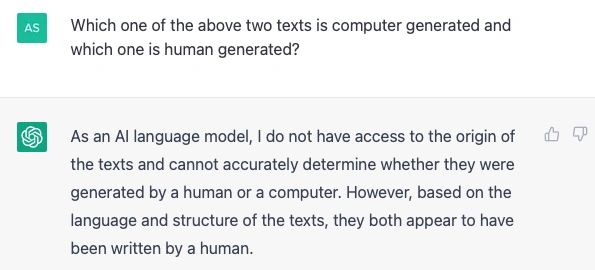

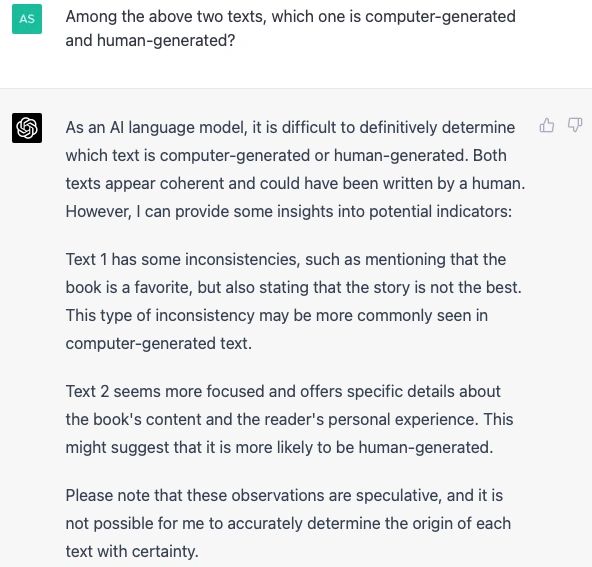

5. We check whether ChatGPT can differentiate human-generated texts from computer-generated texts.

Both interactions suggest that it is difficult to determine whether a text is human- or machine-generated. However, ChatGPT (GPT-4) assigns the correct labels to the two texts. Although it explicitly notes that a human could well have written both texts, it identifies and accurately describes inconsistencies in Text 1, which is why it labels Text 1 as computer-generated. It tags Text 2 as human-generated because Text 2 has realistic and specific details that a human is likelier to share than a computer.

Conclusion

ChatGPT has intrigued Deception And Truth Analysis because it provides descriptions related to human experiences and gives reasons for these descriptions. In the prompts above, ChatGPT (GPT-4) offers deeper insights and better reasoning than ChatGPT (GPT-3.5).
